I've had the privilege of working on some amazing CTFs (Capture the Flag competitions) in my career, from building internal ones to delivering them for companies at cons and being part of the team running them at community events. Making challenges and seeing people learn from them is something that brings me great joy. There is a pretty insane amount of effort that goes into a good CTF. Coming up with challenges, spinning up reliable infrastructure for hundreds or thousands of people, and wrangling a bunch of volunteers takes patience, perseverance and hundreds of hours, usually crunched into the few weeks/days/hours before the event.

CrikeyCon 11 was no exception. We had big dreams about starting earlier but soon found ourselves working right up to the bell (even pushing challenges during the event). In a lot of ways I probably wouldn't change a thing about that; pressure makes diamonds.

As usual, I want to thank the challenge writers (you guys rock) and my fellow organisers and volunteers (you know who you are). Your continued dedication and hard work are so impressive and something you should be proud of.

Without further ado, here is my retrospective for the CrikeyCon 11 CTF.

The ai-lephant in the room

For those who didn't attend CrikeyCon this year or the closing ceremony (where were you!?), you missed what could be considered a reasonably large disruption within the CTF space: AI. Now, to most CTF attendees or organisers this isn't a surprise: AI is pretty well equipped to aid competitors. This year, however, it went well beyond aiding. We had our first competitor simply authenticate Claude Code with their CTFd cookies and prompt it to complete the CTF. There was a lot more to it than that, and I won't steal that competitor's thunder; I'll share a link once they have written it up themselves. To clarify, we (the organisers of the CTF) were aware of this and largely saw it as a reasonable experiment. The surprising (or perhaps, to me, unsurprising) outcome was that this competitor won. The winning competitor graciously passed their first prize on to the non-agentic winner. The agent basically flattened our CTF, completing 79 of the roughly 80 challenges, including the access control challenges but excluding the lock-picking ones.

As with any disruptive technology there are different schools of thought as to what this means and I want to capture some of them for posterity.

CTFs are dead

This was probably the most common reaction from challenge writers. Writing unique challenges is hard, and there is an expectation that people will put effort into solving them. It's a natural reaction: one of the greatest rewards of writing a challenge is absolutely nailing it. Nailing a challenge can take a few different forms:

1. You make a challenge that gets solved by only one team
2. You make a challenge that fits its category and difficulty rating perfectly
3. You make a challenge that is unique and quirky and absolutely frustrates the hell out of a team/player

All of these are what I personally consider success when writing a challenge. Sometimes your challenges are meant to be solved (especially 101 challenges), sometimes they aren't meant to be solved and sometimes you just really want the winning team to beg you for the answer.

The outcome on Saturday takes away all of these rewards. If your challenge can be solved by AI, then every team has access to the solution, so point 1 no longer applies. Point 2: the category and difficulty no longer matter. And point 3? Well, Claude doesn't complain. This leaves authors with less incentive to create challenges, which in turn leaves the CTF scene less vibrant and interesting.

CTFs are going to have to change but I am not quite sure how

Another reaction was: hmmm, what do we do? I call this the "what's next?" reaction. Essentially, these people acknowledge how cool the technology is but aren't sure how we can incorporate it. I think this is probably the second most popular viewpoint. They see that there is a future but aren't quite sure how we get there. In some cases there is a feeling of not wanting to own that future. This is something I saw in some CTF players and challenge writers as well.

Wow this is cool / Wow this is scary

Pretty self-explanatory. Most people fall into this category. They are either excited or afraid. Either one is a perfectly natural response. I have a post I am cooking up about this topic so I won't spend too much time on it.

So what does the future of CTF look like?

These are just my thoughts so take them with a grain of salt.

We probably need two streams of CTF: a classic CTF and an agent-centric CTF

The traditional CTF still has a place, and I think it should continue. The reason I say this is that the core benefit for participants (aside from Lego and bragging rights) is learning. As CTF writers, we have a responsibility to learners to keep giving them a place to play and learn. That being said, we will probably have to take a harder line on AI within these competitions and rethink our challenges a little.

During the CTF we offered prizes to people who would come up and admit to (and show us) using AI. At one point we had a line of 12 people waiting to show us their laptops with ChatGPT and Claude windows open. AI is inescapable, so we need to think about how we can account for it. This is going to take time and creativity.

The second stream is an agent-centric one. I have some thoughts about what this might look like.

Agent jungle gym

It has long been a desire of mine and of other CrikeyCon peeps to bring back a form of the Red vs Blue CTF challenge. These are dynamic attack and defence challenges that more closely imitate the way attackers and defenders work in a network. I think the outcome of this weekend just past justifies that. I've sketched out something I like to call the jungle gym. It's a hybrid between red vs blue and a king of the hill challenge, with a twist: agents only, or a combination of agents and humans.

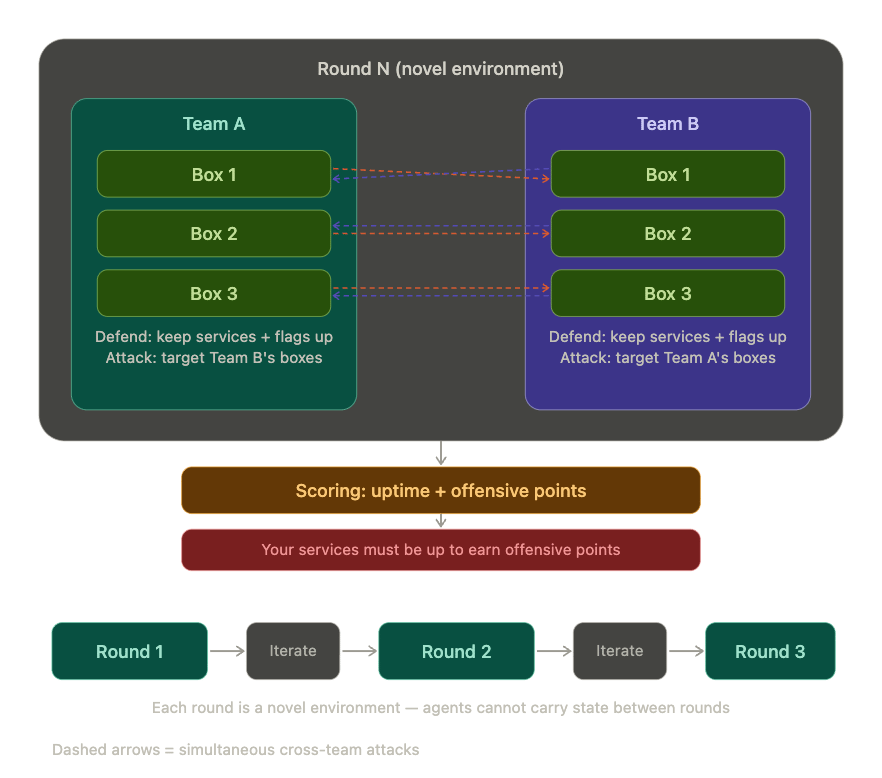

Essentially, there are three rounds. At the beginning of each round you submit your agent (or agents). Your agents are presented with a series of boxes they must protect and a series of boxes they must attack. The boxes they are protecting run services and hold flags that must remain available; keeping them available is a prerequisite for scoring offensive points. Points are awarded for keeping your services available and for taking down other teams' services. Each round is a novel environment, and in between rounds competitors get to work on their agentic approaches before the next one starts.

This creates an interesting and dynamic environment for players and observers, and it also removes the inherent edge AI gives in a traditional CTF, since every team is expected to bring an agent. I am also exploring how to showcase what the agents are doing, and whether the event should be run by a form of AI dungeon master that handles injects. It would also be interesting to see, for example, a team decide not to use AI and compare their placing to others'. This is still early stages and we are a fair way out from it. My soft goal is to run an online version of this sometime in the latter half of this year. If you're interested in taking part, reach out to me; I will be writing and sharing more about this soon.

I will also be seeking sponsors to help pay for tokens so that there isn't a financial barrier to participation. If you've got someone who can help with that, please point them in my direction.
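To make the jungle gym scoring rule a little more concrete, here's a minimal Python sketch of how one scoring tick might work. Treat nearly all of it as an assumption: the point values, the tick-based model and the `TickReport` structure are hypothetical, because the actual scoring hasn't been designed yet. The one rule it takes directly from the description above is that a team only earns offensive points while all of its own services are up.

```python
from dataclasses import dataclass, field

# Hypothetical point values; the real jungle gym scoring is not defined yet.
DEFENCE_POINTS_PER_SERVICE = 10
OFFENCE_POINTS_PER_TAKEDOWN = 25


@dataclass
class TickReport:
    """What a (hypothetical) checker observed for one team in one tick."""
    team: str
    own_services_up: dict[str, bool]  # service name -> currently available?
    services_taken_down: set[str] = field(default_factory=set)  # "team/service"


def score_tick(report: TickReport) -> int:
    """Defence points for every service that is up; offence points only if
    *all* of the team's own services are up (the availability prerequisite)."""
    up = sum(1 for ok in report.own_services_up.values() if ok)
    score = up * DEFENCE_POINTS_PER_SERVICE
    if report.own_services_up and all(report.own_services_up.values()):
        score += len(report.services_taken_down) * OFFENCE_POINTS_PER_TAKEDOWN
    return score


def score_round(reports: list[TickReport]) -> dict[str, int]:
    """Sum tick scores per team across a whole round."""
    totals: dict[str, int] = {}
    for report in reports:
        totals[report.team] = totals.get(report.team, 0) + score_tick(report)
    return totals
```

For example, a team with both of its services up that has taken down one opposing service would score 10 + 10 + 25 = 45 for that tick, while a team with one service down would score only the 10 defence points for its surviving service, no matter how many takedowns it claimed.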

AI has benefits for challenge creators

Writing challenges is time-consuming. There are a lot of ways that we as challenge creators can use agents to improve this process.

I experimented a fair bit with agents this year and have the following observations:

Agents build much better UI/UX than we normally would, especially for web challenges

UI/UX historically hasn't been part of the challenge space. A lot of people who write challenges aren't incentivised to build a good UI for something that will be used for one day. Agents change this a bit. Having an LLM create a functioning user interface that sits over the top of your web challenge can be extremely powerful. It allows for much better visual storytelling and theming as well. We had some genuinely unique and interesting challenges that were greatly enhanced by the interface we could put on top of them.

Agents can add dynamism to challenges

Last year I experimented with agentic challenges by creating an IRC server populated with LLMs. Each LLM had a different level of protection against prompt injection and a flag it needed to protect. This year we initially didn't have any LLM challenges, but during the day I set myself a challenge: use an agent to build an agentic challenge and push it live before the day ended.

The result was a challenge called slop-machine. Essentially, you pulled the lever and got a random challenge displayed within an iframe on the page. Each challenge was vibe-coded live by an LLM (qwen3.5-35b-a3b, an open-source model) the moment you pulled the lever and was presented to you without a human reviewing it. Topics were mostly web and crypto, obviously limited by the implementation. There was an element of luck: compared with a hand-written challenge, you had a much greater chance of being presented with one you literally could not solve (either because the LLM had just borked it or because the answer was wrong).

It was interesting to watch. Some people came up and asked why it was so easy, while I watched others pull the lever again and again, perpetually getting broken challenges. It's fun watching the non-deterministic nature of LLMs play out in real time. Dynamic interaction is a really powerful way of making a challenge engaging and interesting, and I've got a lot more ideas that I will be bringing to future CTFs.
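For anyone curious about how something like slop-machine hangs together, here's a rough Python sketch of the lever-pull flow. It is not the code that ran at the con: the `generate` callable stands in for the call to the model, and the class name, method names and iframe attributes are assumptions for illustration. What it tries to capture is that nothing sits between the model's output and the player, and that the answer check trusts whatever flag the model claimed, which is exactly how a pull can produce an unsolvable challenge.

```python
import html
import random
import secrets
from typing import Callable, Optional

# Hypothetical generator interface: given a topic, return the generated
# challenge page and the flag the model says solves it. At the con this was
# an LLM call; any callable works here so the sketch stays self-contained.
Generator = Callable[[str], tuple[str, str]]

TOPICS = ["web", "crypto"]  # slop-machine's topics were mostly web and crypto


class SlopMachine:
    def __init__(self, generate: Generator, rng: Optional[random.Random] = None):
        self.generate = generate
        self.rng = rng or random.Random()
        self.flags: dict[str, str] = {}  # pull id -> flag the model claimed

    def pull_lever(self) -> tuple[str, str]:
        """Generate a fresh challenge and return (pull_id, iframe_markup).

        There is deliberately no review step: whatever the model produced is
        served to the player, working or not.
        """
        topic = self.rng.choice(TOPICS)
        challenge_html, flag = self.generate(topic)
        pull_id = secrets.token_hex(8)
        self.flags[pull_id] = flag
        # srcdoc inlines the generated page; sandboxing without
        # allow-same-origin gives it an opaque origin, separate from ours.
        iframe = (
            '<iframe sandbox="allow-scripts allow-forms" srcdoc="'
            + html.escape(challenge_html, quote=True)
            + '"></iframe>'
        )
        return pull_id, iframe

    def submit(self, pull_id: str, answer: str) -> bool:
        """Compare an answer with the flag the model claimed for that pull."""
        expected = self.flags.get(pull_id)
        if expected is None:
            return False
        return secrets.compare_digest(expected.encode(), answer.encode())
```

Because the stored flag is whatever the model said it was, a challenge whose page and flag disagree is simply unwinnable; a reviewed pipeline would need an extra step (such as an automated solve) before serving anything.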

Agents are generally pretty capable of handling your 101 challenges

101 challenges are a must for any CTF, especially for Crikey. A lot of attendees are students or people new to the industry. These challenges are very similar each year but still need to be slightly different and fit the yearly theme. They make up a reasonable portion of the challenges each year and are still time-consuming. Agents are great at making these (with a bit of prodding), so if you're building 101 challenges, agents are your friend. Avoid trying to one-shot several at once: I tried both approaches, and the challenges one-shot in a batch were extremely unreliable. Use planning mode and be clear about what you want.

Agents are better at solving challenges than writing them

In a similar vein, agents are currently much better at solving challenges than writing them. Agentic output is inherently derivative; it's noisy enough to be interesting but not yet truly novel. Challenge writing requires novel thinking and current context that LLMs lack. Humans are still the best at writing difficult and interesting challenges. For now, I would recommend using agents only for the easiest challenges, agent-centric challenges, UI/UX, dynamic testing and writing infrastructure as code (IaC).

Alright, enough AI.

What we did right

Created a great vibe within the CTF room

CTFs should be social; communication and leaning on your community are super important skills. By taking part, participants give up a lot of the benefits of a con, so we want to try to bring some of that experience to them. This year (thanks to our awesome community and sponsors) we had a bunch of prizes, which let us come up with some interesting ways of giving them out. Aside from the AI confessional, we also had a rap battle. We offered 1000 points to the winner, giving teams 45 minutes to come up with a unique rap. This was entirely off the cuff and honestly we did not expect the uptake; something like 8 teams came up and competed. We ended up with a tie for first and gave the two tied teams another 15 minutes to come up with lines for a head-to-head rap battle.

The finalist teams were both university cybersecurity societies. It was awesome to see the next generation of cybersecurity professionals so willing to get up in front of the community and have fun. I think that says a lot about the unique community we have in our industry, and it's something I and the other organisers want to bring to future cons.

Improved infrastructure maturity

Last year we had a largely ClickOps-based approach to deploying our CTF infrastructure, and I spent days making sure everything was working. This year was entirely different. The underlying infrastructure was managed with Terraform, services were scheduled with Nomad, and challenge definition files were linted in CI/CD. This wasn't perfect; we had a few blips where we had to scale instances up beyond what we expected, but it made getting started so much easier. Building on those learnings, I'll soon publish a post officially open-sourcing the infrastructure template I've created. My hope is that this will help everyone run better CTFs themselves.
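As an illustration of the linting step, here's a minimal Python sketch of a checker a CI job could run over challenge definitions before deploying. The field names, allowed categories and flag pattern are all hypothetical (our actual definition format isn't described here), and it works on already-parsed dictionaries so it isn't tied to YAML, JSON or any particular file layout.

```python
import re
from typing import Any

# Hypothetical schema: field names, categories and flag format are
# illustrative assumptions, not a real CrikeyCon definition format.
REQUIRED_FIELDS = {"name": str, "category": str, "value": int, "flag": str}
ALLOWED_CATEGORIES = {"101", "web", "crypto", "forensics", "misc"}
FLAG_PATTERN = re.compile(r"^[A-Za-z0-9_]+\{[^{}]+\}$")  # e.g. PREFIX{...}


def lint_challenge(defn: dict[str, Any]) -> list[str]:
    """Return the problems found in one challenge definition (empty = OK)."""
    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in defn:
            problems.append(f"missing field '{name}'")
        elif not isinstance(defn[name], expected_type):
            problems.append(f"'{name}' should be {expected_type.__name__}")
    category = defn.get("category")
    if isinstance(category, str) and category not in ALLOWED_CATEGORIES:
        problems.append(f"unknown category '{category}'")
    if isinstance(defn.get("value"), int) and defn["value"] <= 0:
        problems.append("'value' must be positive")
    if isinstance(defn.get("flag"), str) and not FLAG_PATTERN.match(defn["flag"]):
        problems.append("flag does not match the expected format")
    return problems


def lint_all(defns: list[dict[str, Any]]) -> dict[str, list[str]]:
    """Lint every definition and also catch duplicate challenge names."""
    report: dict[str, list[str]] = {}
    seen: set[str] = set()
    for i, defn in enumerate(defns):
        key = str(defn.get("name", f"<unnamed #{i}>"))
        problems = lint_challenge(defn)
        if key in seen:
            problems.append("duplicate challenge name")
        seen.add(key)
        if problems:
            report[key] = report.get(key, []) + problems
    return report
```

In CI you would parse each definition file, call `lint_all`, print the report and fail the pipeline if it isn't empty, so a typo'd category or malformed flag never reaches the scoreboard.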

Some changes I want to make include creating traditional test harnesses as well as agentic ones, so that LLMs can have a crack at challenges. This will give us an idea of whether our challenges are solvable by LLMs and also help us spot availability and performance issues earlier.
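A traditional harness could start as small as the Python sketch below: fetch the challenge to confirm it's up and time the response, then run a scripted solver and compare its output with the expected flag. The names (`check_challenge`, `CheckResult`, the per-challenge solver callables) are hypothetical. An agentic harness might plug in the same way by passing a solver that hands the URL to an agent, though that part is an assumption rather than something we've built.

```python
import time
import urllib.request
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CheckResult:
    name: str
    available: bool
    solved: bool
    latency_s: Optional[float] = None  # time taken by the availability fetch
    error: str = ""


def http_get(url: str, timeout: float = 5.0) -> str:
    """Fetch a URL; raises OSError (URLError/HTTPError are subclasses) on failure."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


def check_challenge(
    name: str,
    url: str,
    solver: Callable[[str], str],
    expected_flag: str,
    fetch: Callable[[str], str] = http_get,
) -> CheckResult:
    """Check that a challenge is reachable, then that a solver recovers its flag."""
    start = time.monotonic()
    try:
        fetch(url)
    except (OSError, ValueError) as exc:  # unreachable, HTTP error, bad URL
        return CheckResult(name, available=False, solved=False, error=str(exc))
    latency = time.monotonic() - start
    try:
        flag = solver(url)
    except Exception as exc:  # a broken solve script shouldn't crash the run
        return CheckResult(name, True, False, latency, f"solver failed: {exc}")
    return CheckResult(name, True, flag.strip() == expected_flag, latency)
```

Running this on a schedule against every deployed challenge would surface both "the box is down" and "the box is up but the intended solve no longer works", which are the two failure modes that hurt most on the day.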

Fresh blood in the challenge creator space

I've said it a million times, but I am so thankful for our challenge writers, and I have an extra pile of thanks for our new ones. You've become part of a strange, dysfunctional family that is so much better for having you in it. It takes a lot of courage to write a CTF challenge; you're basically asking people to hack something you made. We saw some awesome challenges from new creators that were fresh, vibrant and different.

What we could do better

Start earlier (we try)

My perpetual dream with every CTF is to start earlier. This is a pretty hard ask for our volunteers and creators, so it's probably going to stay a wish. There are definitely some project management and organisational bits and bobs we could put in place as well.

Expand our challenge base

We got lots of great feedback this year about blue team and forensics challenges. These are things we'd love to have more of. Our challenge makeup currently reflects our creators, which is to say very web/offensive-security heavy. Variety is the spice of life, so if you're interested in becoming a challenge writer, please reach out to me. There is so much to gain from it, and writing challenges is such a great way to validate your understanding. We are open to any challenge from anyone, and if you're looking for ideas, we are full of those too.

Embrace AI

I've said enough about this above. There is more we can do in this space and we will.

Happy hacking and see you next year.